The Strategic Guide to AI Implementation in Healthcare

Introduction

Artificial intelligence is reshaping healthcare by supporting better decisions and smarter processes. Hospitals now use AI for scheduling, billing, diagnostics, and patient monitoring. It is no longer just a future idea. It is already part of daily operations.

But implementing AI in healthcare is not as simple as installing new software. Healthcare systems are complex. They manage sensitive patient data, strict regulations, and critical medical decisions. Without a clear plan, AI projects can become expensive, confusing, or difficult for staff to adopt.

Many organizations move too quickly into advanced AI tools without preparing their systems or data. When the foundation is weak, results suffer. That is why AI must be implemented step by step, with structure and governance.

In this article, we will explain a practical 3-layer framework for AI implementation in healthcare. We will explore how organizations can begin with administrative automation, move into diagnostics and operational intelligence, and responsibly adopt Clinical Decision Support Systems. We will also discuss ethics, governance, leadership priorities, and real-world AI use cases.

The Evolving Role of AI in Modern Healthcare

AI in healthcare has evolved quickly over the past decade. In the beginning, most AI tools focused on basic automation. They helped hospitals reduce paperwork, organize patient data, and improve scheduling systems.

Today, AI plays a much bigger role.

Modern healthcare organizations use AI to analyze imaging results, detect patterns in lab reports, predict patient admission rates, and even assist in treatment planning. AI systems can process large amounts of data in seconds, something that would take humans hours or even days.

This shift has changed how healthcare leaders think about technology.

AI is no longer just an operational tool. It is becoming a strategic asset.

For example, predictive models can help hospitals prepare for seasonal patient surges. Risk scoring systems can identify patients who may need early intervention. Financial analytics platforms can detect revenue leakage before it becomes a larger problem.

At the same time, expectations are growing. Patients expect faster service, better communication, and personalized care. AI can help healthcare providers meet these expectations without adding to staff workload.

However, as AI becomes more powerful, it also becomes more complex. The more it influences diagnostics and clinical decisions, the more important governance, ethics, and validation become.

This is why understanding the evolving role of AI is critical. It is not just about technology adoption. It is about reshaping workflows, decision-making processes, and leadership strategies.

Healthcare organizations that understand this shift will implement AI with clarity and control. Those that do not may struggle with scattered tools and unclear outcomes.

AI is not just improving healthcare operations. It is redefining how healthcare systems function.

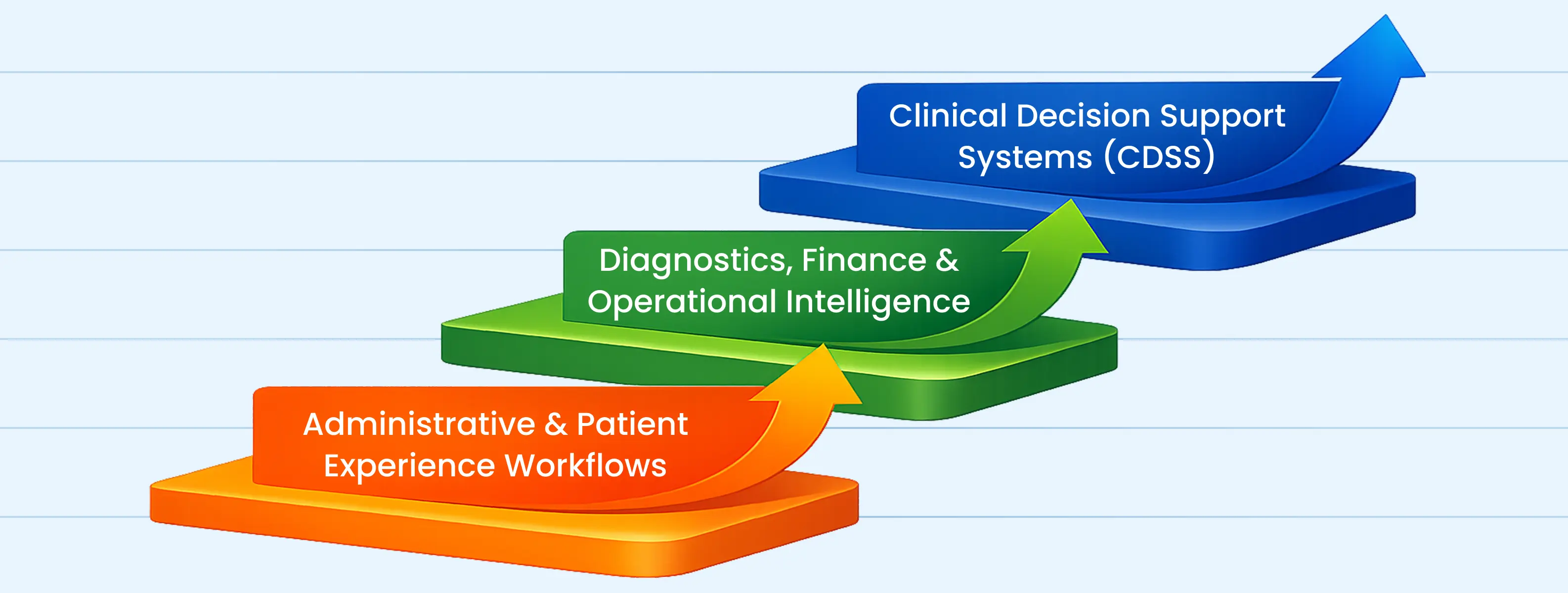

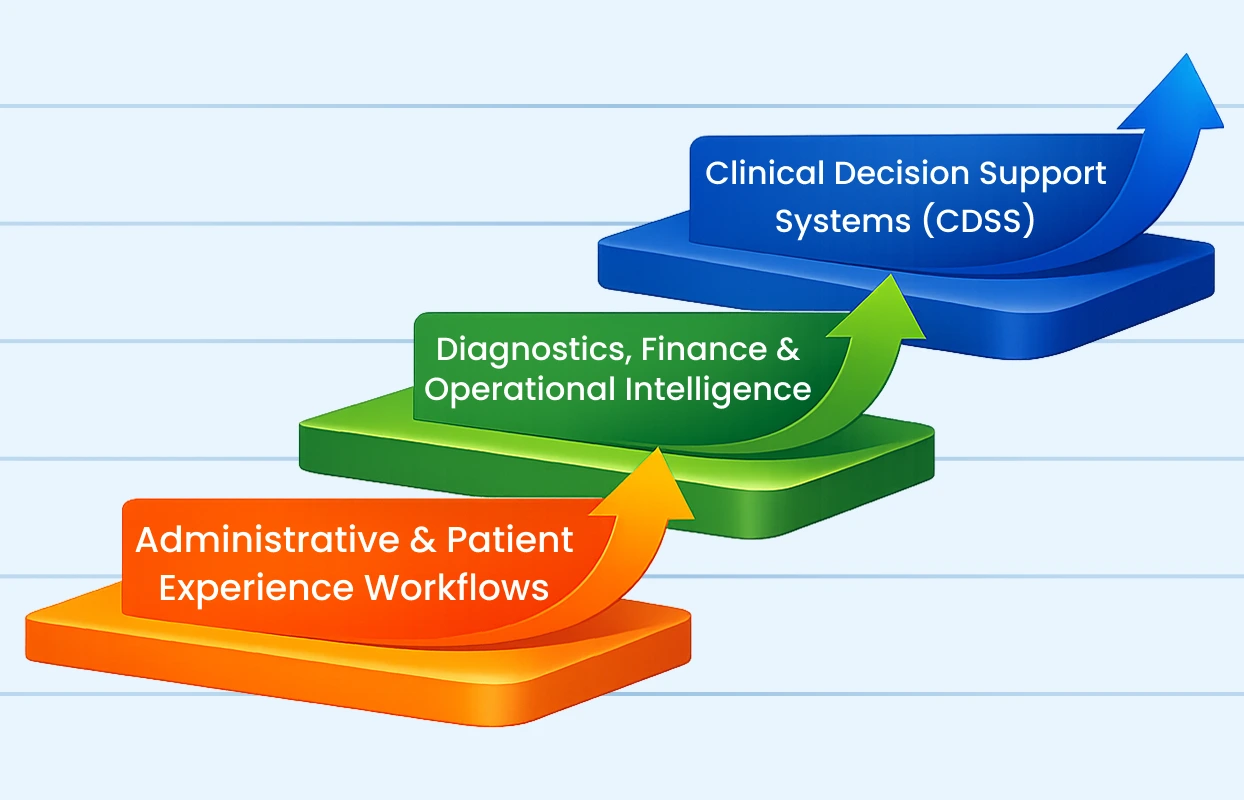

The 3-Layer Framework for AI Implementation

Implementing AI in healthcare should not feel overwhelming. The key is to move in stages instead of trying to transform everything at once.

A structured 3-layer framework helps healthcare organizations adopt AI safely, build internal confidence, and reduce risk. Each layer strengthens the foundation for the next.

The three layers are:

- Layer 1 focuses on administrative and patient experience workflows.

- Layer 2 expands into diagnostics, finance, and operational intelligence.

- Layer 3 introduces Clinical Decision Support Systems (CDSS).

These layers are not isolated. They are connected. Success in one layer supports progress in the next.

In the first layer, the goal is stability and efficiency. Hospitals improve scheduling, billing accuracy, documentation, and patient communication. These improvements are practical and measurable. They reduce manual workload and create early wins.

In the second layer, AI begins to generate deeper insight. It analyzes diagnostic data, predicts operational trends, and strengthens financial management. At this stage, organizations start using AI not just to automate tasks, but to guide planning and performance.

The third layer is the most advanced. Clinical Decision Support Systems assist physicians by analyzing patient history, lab data, and treatment guidelines to provide evidence-based recommendations. This layer directly supports clinical decision-making, which means it requires stronger governance, validation, and oversight.

The purpose of this layered approach is simple. It prevents rushed implementation. It ensures data is clean before advanced systems are introduced. It builds trust among staff. And it allows leadership to measure value at every stage.

AI implementation works best when it grows with the organization, not ahead of it.

In the next sections, we will explore each layer in detail, beginning with administrative and patient experience workflows.

Layer 1: Administrative and Patient Experience Workflows

Layer 2: Diagnostics, Finance, and System Intelligence

After building a stable base in administrative workflows, healthcare organizations can move to the second layer of AI implementation. At this stage, AI begins to support deeper analysis across diagnostics, financial management, and hospital planning.

In diagnostics, AI tools can review imaging scans, lab reports, and patient records to detect patterns that may need closer attention. For example, an AI system can highlight unusual areas in radiology images or identify abnormal lab value trends over time. These tools do not replace doctors. They help organize large amounts of information and bring important details forward so clinicians can review them carefully.

In financial management, AI can analyze billing history, track revenue cycles, and flag claims that may face rejection. By learning from past data, the system can identify common errors before submission. This supports smoother payment processes and better financial control.
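As a minimal sketch of the pre-submission check described above, the Python below flags claims using a few hand-written rules. The field names (`patient_id`, `diagnosis_code`, `amount`), the placeholder code `X00`, and the rules themselves are illustrative assumptions. A production system would more likely learn its patterns from historical denials.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    # Hypothetical claim fields, chosen for illustration only.
    claim_id: str
    patient_id: Optional[str]
    diagnosis_code: Optional[str]
    amount: float


def flag_claim(claim: Claim) -> list[str]:
    """Return the reasons a claim may be rejected (empty list if none)."""
    reasons = []
    if not claim.patient_id:
        reasons.append("missing patient ID")
    if not claim.diagnosis_code:
        reasons.append("missing diagnosis code")
    if claim.amount <= 0:
        reasons.append("non-positive billed amount")
    return reasons


# Made-up sample claims; "X00" is a placeholder, not a real diagnosis code.
claims = [
    Claim("C-1", "P-100", "X00", 250.0),
    Claim("C-2", None, "X00", 120.0),
    Claim("C-3", "P-102", None, 0.0),
]

# Keep only the claims that triggered at least one rule.
flagged = {c.claim_id: flag_claim(c) for c in claims if flag_claim(c)}
print(flagged)
```

Returning the reasons, rather than a simple yes or no, lets billing staff see what to correct before the claim is submitted.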

Hospital planning also becomes more data-driven at this stage. AI can forecast patient admission trends, anticipate busy periods in emergency departments, and suggest staffing adjustments. It can also review bed usage patterns and medical supply consumption to help leadership plan resources wisely.
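To make the forecasting idea concrete, here is a deliberately simple sketch: a moving average over recent daily admissions, compared against a capacity threshold. The admission counts, the 7-day window, and the threshold of 48 are all made-up assumptions. Real forecasting would also need to account for seasonality, day-of-week effects, and local events.

```python
from statistics import mean


def moving_average_forecast(daily_admissions: list[int], window: int = 7) -> float:
    """Forecast the next day's admissions as the mean of the last `window` days."""
    if len(daily_admissions) < window:
        raise ValueError("not enough history for the chosen window")
    return mean(daily_admissions[-window:])


def is_surge(forecast: float, capacity_threshold: float) -> bool:
    """Flag a likely busy period when the forecast exceeds the threshold."""
    return forecast > capacity_threshold


# Hypothetical admission counts for the last ten days.
history = [40, 42, 38, 45, 50, 47, 46, 52, 55, 53]

forecast = moving_average_forecast(history, window=7)
surge = is_surge(forecast, capacity_threshold=48)
print(f"forecast={forecast:.1f}, surge expected={surge}")
```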

Layer 2 moves beyond basic automation. It focuses on turning healthcare data into useful insights that guide everyday decisions across departments.

This stage requires organized data and better coordination between systems. When information is accurate and consistent, AI tools produce more reliable results.

Layer 3: Clinical Decision Support Systems (CDSS)

The third layer of AI implementation focuses on Clinical Decision Support Systems, often called CDSS. This is the stage where AI directly supports doctors and care teams during clinical decision-making.

At this level, AI systems analyze patient data in real time. This may include medical history, lab results, imaging reports, medication records, and even data from wearable devices. The system then provides evidence-based suggestions, risk alerts, or treatment guidance based on recognized clinical patterns.

It is important to understand that CDSS does not replace doctors. It supports them. Final decisions always remain with licensed medical professionals. AI acts as a second set of eyes, helping reduce missed details and bringing attention to possible risks earlier.

For example, a CDSS tool can alert a physician if a prescribed medication may interact negatively with another drug the patient is already taking. It can identify early signs of sepsis based on subtle changes in vital signs. It can also suggest guideline-based treatment pathways for chronic diseases such as diabetes or heart conditions.
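The medication alert in the first example can be sketched as a lookup against an interaction table. Everything below is an assumption for illustration: the drug names are placeholders, and the table is hypothetical. A real CDSS would draw on a curated, clinically validated knowledge base.

```python
# Hypothetical interaction table with placeholder drug names.
# Each key is an unordered pair, so lookup works in either direction.
INTERACTIONS = {
    frozenset({"drug_a", "drug_b"}): "increased bleeding risk (placeholder)",
    frozenset({"drug_c", "drug_d"}): "reduced effectiveness (placeholder)",
}


def interaction_alerts(current_meds: list[str], new_med: str) -> list[str]:
    """Return alert messages for known interactions with the newly prescribed drug."""
    alerts = []
    for med in current_meds:
        note = INTERACTIONS.get(frozenset({med.lower(), new_med.lower()}))
        if note:
            alerts.append(f"{new_med} + {med}: {note}")
    return alerts


# The physician prescribes drug_a to a patient already taking drug_b and drug_x.
alerts = interaction_alerts(["drug_b", "drug_x"], "drug_a")
for alert in alerts:
    print("ALERT:", alert)
```

The function only surfaces the alert. Whether to change the prescription remains the physician's decision.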

Because this layer directly influences patient care, it requires careful planning.

Healthcare organizations should introduce CDSS only after:

- Data systems are accurate and integrated

- Clinical workflows are clearly defined

- Governance policies are in place

- Staff are trained to interpret AI recommendations

Trust is essential at this stage. Clinicians must understand how the system generates recommendations. Transparency and explainability are critical. If users do not trust the output, they will ignore it.

CDSS should be introduced in phases. Start with one department or one use case. Monitor outcomes. Collect feedback from physicians. Refine the system before expanding it across the organization.

When implemented responsibly, CDSS can improve patient safety, support early detection of serious conditions, and help standardize care delivery across teams.

Checkpoints for Layer 3 Adoption

Before expanding Clinical Decision Support Systems, healthcare leaders should review several checkpoints:

First, data integrity must be verified. Incomplete or inconsistent records can lead to incorrect alerts.

Second, clinical validation is required. AI models should be tested against real-world patient cases to confirm reliability.

Third, clear accountability must be defined. Everyone should understand that AI provides recommendations, not final decisions.

Fourth, documentation processes must record when AI suggestions are accepted or overridden. This supports transparency and quality improvement.

Fifth, ongoing monitoring should be established. AI systems must be reviewed regularly to ensure they continue to perform safely over time.

These checkpoints protect both patients and providers.
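One way to picture the fourth checkpoint is a small log that records whether each AI suggestion was accepted or overridden, along with the clinician's reason. The class and field names below are hypothetical. A real deployment would write to the organization's own audit infrastructure rather than an in-memory list.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class SuggestionRecord:
    suggestion_id: str
    clinician_id: str
    action: str            # "accepted" or "overridden"
    reason: str            # free-text rationale, most useful for overrides
    recorded_at: datetime


class SuggestionLog:
    """In-memory log of how clinicians responded to AI suggestions."""

    def __init__(self) -> None:
        self._records: list[SuggestionRecord] = []

    def record(self, suggestion_id: str, clinician_id: str,
               action: str, reason: str = "") -> None:
        if action not in {"accepted", "overridden"}:
            raise ValueError(f"unknown action: {action}")
        self._records.append(SuggestionRecord(
            suggestion_id, clinician_id, action, reason,
            datetime.now(timezone.utc),
        ))

    def override_rate(self) -> float:
        """Share of logged suggestions that clinicians overrode."""
        if not self._records:
            return 0.0
        counts = Counter(r.action for r in self._records)
        return counts["overridden"] / len(self._records)


log = SuggestionLog()
log.record("S-1", "dr_01", "accepted")
log.record("S-2", "dr_02", "accepted")
log.record("S-3", "dr_01", "overridden", reason="patient history not in record")
log.record("S-4", "dr_03", "accepted")
print(f"override rate: {log.override_rate():.0%}")
```

A rising override rate is a useful signal for the fifth checkpoint as well, since it may indicate that the model no longer matches clinical practice.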

Ethics and Governance in AI

As AI becomes more involved in healthcare decisions, ethics and governance can no longer be optional. They must be built into every layer from the beginning.

Healthcare deals with human lives. Every recommendation, alert, or prediction produced by AI has real consequences. That is why organizations must create clear rules about how AI systems are selected, tested, monitored, and used.

The first priority is patient privacy. AI systems rely on large amounts of data. Hospitals must ensure that patient information is protected under data protection laws and internal security standards. Access to sensitive information should be limited, tracked, and regularly reviewed.

The second priority is fairness. AI models learn from historical data. If that data contains bias, the system may produce unfair recommendations. For example, certain populations may be underrepresented in training data. This can lead to unequal risk predictions or treatment suggestions. Regular audits help identify and correct these issues.
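A basic audit of this kind might compare how often the model flags patients in each group. The sketch below uses made-up records and hypothetical group labels. A gap in flag rates does not prove bias on its own, since groups can differ in underlying risk, but a large gap is a reasonable trigger for closer review.

```python
from collections import defaultdict


def flag_rate_by_group(records: list[dict]) -> dict[str, float]:
    """Compute the share of patients flagged as high risk within each group."""
    totals: dict[str, int] = defaultdict(int)
    flagged: dict[str, int] = defaultdict(int)
    for r in records:
        totals[r["group"]] += 1
        flagged[r["group"]] += int(r["flagged"])
    return {g: flagged[g] / totals[g] for g in totals}


def max_rate_gap(rates: dict[str, float]) -> float:
    """Largest difference in flag rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Made-up model outputs; "A" and "B" are placeholder group labels.
records = [
    {"group": "A", "flagged": True},
    {"group": "A", "flagged": True},
    {"group": "A", "flagged": False},
    {"group": "A", "flagged": False},
    {"group": "B", "flagged": True},
    {"group": "B", "flagged": False},
    {"group": "B", "flagged": False},
    {"group": "B", "flagged": False},
]

rates = flag_rate_by_group(records)
gap = max_rate_gap(rates)
print(rates, f"gap={gap:.2f}")
```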

Transparency is equally important. Clinicians should understand how an AI system reaches its conclusions. If a system provides a risk score, doctors should be able to see the factors behind that score. Clear explanations build trust and improve adoption.

Governance also requires defined responsibility. Every AI system should have:

- A clinical sponsor

- A technical owner

- A compliance reviewer

- A monitoring team

This ensures that no tool operates without oversight.
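The four roles listed above lend themselves to a simple automated check. The sketch below assumes a hypothetical registry of AI systems stored as dictionaries. The system names and role keys are illustrative, not a standard.

```python
# Role keys mirror the four responsibilities listed above.
REQUIRED_ROLES = (
    "clinical_sponsor",
    "technical_owner",
    "compliance_reviewer",
    "monitoring_team",
)


def missing_roles(system: dict) -> list[str]:
    """Return the required roles that are absent or empty for one AI system."""
    return [role for role in REQUIRED_ROLES if not system.get(role)]


# Hypothetical registry entries.
registry = [
    {
        "name": "scheduling-assistant",
        "clinical_sponsor": "Dr. Example",
        "technical_owner": "IT Integration",
        "compliance_reviewer": "Compliance Office",
        "monitoring_team": "Quality Team",
    },
    {
        "name": "sepsis-alert-pilot",
        "clinical_sponsor": "Dr. Sample",
        "technical_owner": "",
    },
]

gaps = {s["name"]: missing_roles(s) for s in registry if missing_roles(s)}
print(gaps)
```

An AI review committee could run a check like this before approving a pilot or reviewing a performance report.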

Hospitals should also establish an AI review committee. This committee can evaluate new AI proposals, assess risk, approve pilots, and review performance reports. Structured governance prevents uncontrolled expansion of tools that may not align with clinical goals.

Finally, AI systems should never operate without human supervision. There must always be a clear path for override. If a clinician disagrees with an AI suggestion, their judgment must take priority.

Ethics and governance are not barriers to innovation. They create safe boundaries that allow innovation to grow responsibly.

AI in Action: Real-World Use Cases in Healthcare

Measuring ROI and Performance Outcomes

Implementing AI in healthcare is not just a technology upgrade. It is a strategic investment. For that reason, organizations must clearly define how they will evaluate return on investment (ROI) and overall performance outcomes.

ROI in healthcare AI should not be measured only in financial terms. While revenue improvement and cost control are important, the real value of AI also includes patient safety, time savings, workflow improvement, and better clinical insight.

The first step in measuring ROI is setting clear goals before deployment. For example, if AI is introduced in appointment scheduling, the goal might be reducing missed appointments. If deployed in diagnostics, the goal may be earlier detection of high-risk cases. Without defined objectives, it becomes difficult to measure impact.
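Taking the scheduling example, a predefined goal can be checked with simple arithmetic. The appointment counts and the 20% reduction target below are invented for illustration. Each organization would set its own baseline period and target.

```python
def no_show_rate(missed: int, scheduled: int) -> float:
    """Fraction of scheduled appointments that were missed."""
    if scheduled <= 0:
        raise ValueError("scheduled must be positive")
    return missed / scheduled


def relative_reduction(baseline: float, current: float) -> float:
    """Relative improvement versus baseline (0.25 means a 25% reduction)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) / baseline


# Hypothetical figures: one period before deployment, one period after.
baseline_rate = no_show_rate(missed=120, scheduled=1000)
current_rate = no_show_rate(missed=90, scheduled=1000)

TARGET_REDUCTION = 0.20  # assumed goal, agreed before deployment
reduction = relative_reduction(baseline_rate, current_rate)
goal_met = reduction >= TARGET_REDUCTION
print(f"reduction={reduction:.1%}, goal met={goal_met}")
```

A before-and-after comparison like this does not rule out other causes, such as seasonal changes, so it is best read alongside the other indicators discussed in this section.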

Financial indicators are one part of the equation. Organizations can evaluate reduced claim denials, fewer billing corrections, lower overtime costs, or improved resource utilization. These outcomes directly affect financial stability.

Clinical outcomes are equally important. AI systems that support early detection, medication safety alerts, or risk stratification should be assessed based on patient safety indicators. This may include reduced adverse events, faster intervention times, or improved treatment adherence.

Productivity and workflow outcomes should also be tracked. For instance, has documentation time decreased? Are administrative teams handling fewer repetitive tasks? Are physicians able to review patient information more quickly? Time-based performance metrics help determine whether AI is truly supporting daily operations.

Patient experience is another key measurement area. Feedback surveys, response times, and appointment access improvements can reflect the impact of AI-driven communication tools.

It is also important to monitor adoption rates. If staff are not using the AI system regularly, the expected benefits will not materialize. Usage analytics, feedback sessions, and training reinforcement can improve engagement.

ROI measurement should be ongoing. AI systems evolve, and healthcare environments change. Regular reviews allow leadership to adjust strategies, refine models, and expand successful use cases.

Healthcare organizations that measure performance thoughtfully gain more than financial returns. They gain insight into how technology supports their mission of delivering safe, high-quality care.

What Should Next-Gen Hospital Leaders Do?

AI adoption in healthcare is not only a technology decision. It is a leadership decision. The direction, pace, and success of AI implementation depend largely on how hospital leaders think and act.

Next-generation leaders must first develop a clear vision. AI should not be adopted simply because it is trending. Leaders need to define what problems they want to solve. Is the goal to reduce medical errors? Improve patient access? Strengthen financial stability? A clear purpose guides better technology choices.

Second, leaders must invest in data readiness. AI depends on clean, structured, and accessible data. This means reviewing electronic health records, standardizing documentation practices, and ensuring systems can communicate with each other. Without reliable data, even the most advanced AI tool will fail.

Third, leadership must support cultural change. Staff may fear that AI will replace their roles or increase complexity. Leaders should communicate openly, explain the purpose of each system, and provide proper training. When employees understand how AI supports their work, resistance decreases.

Fourth, leaders should start small and scale gradually. Pilot programs allow organizations to test AI in controlled settings. Lessons learned from small deployments reduce risk during broader expansion.

Finally, leaders must commit to continuous monitoring. AI systems evolve. Clinical guidelines change. Data patterns shift. Regular review ensures that AI tools remain aligned with medical standards and organizational goals.

The most successful hospital leaders will not chase technology blindly. They will combine innovation with discipline, curiosity with caution, and ambition with responsibility.

Common Challenges in AI Implementation

AI in healthcare offers strong potential, but the path to implementation is not always smooth. Many organizations face practical and cultural barriers that slow progress or create confusion. Understanding these challenges early helps leaders prepare better solutions.

One of the most common challenges is poor data quality. Healthcare data is often incomplete, inconsistent, or stored in disconnected systems. Duplicate patient records, missing lab values, and inconsistent coding formats can reduce the accuracy of AI outputs. If the input data is weak, the results will also be weak.
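A first-pass data quality check for two of these problems, duplicate patient records and missing lab values, might look like the sketch below. The record layout and the `hba1c` field are hypothetical, and matching on name plus date of birth is a simplifying assumption. Real patient matching is considerably more involved.

```python
from collections import Counter


def data_quality_report(records: list[dict], required_fields: tuple[str, ...]) -> dict:
    """Count likely duplicate patients and missing values in required fields."""
    # Normalize name and date of birth into a simple matching key.
    keys = Counter(
        ((r.get("name") or "").strip().lower(), r.get("dob") or "")
        for r in records
    )
    duplicates = sorted(k for k, n in keys.items() if n > 1)
    missing = {
        field: sum(1 for r in records if r.get(field) in (None, ""))
        for field in required_fields
    }
    return {"duplicate_keys": duplicates, "missing_counts": missing}


# Made-up records; names and values are placeholders.
records = [
    {"name": "Pat Example", "dob": "1980-01-01", "hba1c": 6.1},
    {"name": "pat example ", "dob": "1980-01-01", "hba1c": None},
    {"name": "Sam Sample", "dob": "1975-05-05", "hba1c": 7.0},
]

report = data_quality_report(records, required_fields=("dob", "hba1c"))
print(report)
```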

Another challenge is system integration. Hospitals typically use multiple platforms for electronic health records, imaging, pharmacy, billing, and scheduling. When these systems do not communicate effectively, AI tools cannot access a complete view of patient information. Integration requires time, technical planning, and collaboration between vendors.

Resistance from staff is also common. Doctors, nurses, and administrative teams may worry that AI will increase their workload or reduce their professional autonomy. Some may distrust algorithm-based recommendations. Without proper communication and training, adoption rates can remain low.

Cost concerns can create hesitation as well. While AI can provide long-term value, initial investments in infrastructure, licensing, cybersecurity, and training may feel overwhelming. Leaders must plan budgets carefully and prioritize phased implementation rather than attempting full transformation at once.

Regulatory and compliance uncertainty presents another barrier. Healthcare organizations must ensure that AI systems comply with local health regulations, data protection laws, and medical device standards. This requires legal oversight and careful documentation.

Bias in AI models is another serious concern. If algorithms are trained on limited or unbalanced data, they may produce inaccurate predictions for certain populations. Regular audits and validation processes are necessary to reduce this risk.

There is also the challenge of overexpectation. Some organizations expect AI to deliver instant results. In reality, AI requires testing, refinement, and continuous monitoring. Unrealistic expectations can lead to disappointment and premature abandonment of promising tools.

Finally, governance gaps can create confusion. If there is no clear ownership of AI systems, accountability becomes unclear. Every AI tool should have defined clinical oversight, technical management, and compliance review.

These challenges are real, but they are manageable. With careful planning, clear communication, strong data preparation, and responsible leadership, healthcare organizations can navigate these barriers and build sustainable AI programs.

The Future of AI in Healthcare

Conclusion

Artificial intelligence is changing healthcare, but real progress does not come from rushing into complex systems. It comes from building the right foundation and moving forward step by step.

A layered approach makes AI practical and manageable. It begins with administrative and patient experience workflows. It then expands into diagnostics, finance, and system intelligence. Finally, it reaches clinical decision support, where AI directly assists doctors in patient care.

Each stage requires preparation. Data must be organized. Teams must be trained. Governance must be clearly defined. Ethics must guide every decision.

Healthcare organizations that follow this structured path reduce risk and build internal confidence. Staff understand how AI supports their work. Patients experience safer, more responsive care. Leaders gain better visibility into performance, planning, and long-term strategy.

At Brevity Technology Solutions, we help healthcare organizations design and implement AI systems in a structured and responsible way. From workflow audits to layered deployment and governance planning, our focus is on clarity, safety, and long-term success.

If you are planning your AI journey or want expert guidance on where to begin, you can book a free consultation call with our team. Let’s build a smarter healthcare system, step by step.

FAQ

The first step is understanding your current systems. Before choosing any AI tool, healthcare organizations should review existing workflows, data quality, and technology infrastructure. Identify repetitive tasks, bottlenecks, and areas where data is already being collected but not fully used. Starting with administrative and patient experience workflows is usually the safest approach. It allows organizations to test AI in non-clinical areas before moving into more sensitive systems.

AI can be safe when implemented with proper governance and human oversight. Clinical Decision Support Systems (CDSS) are designed to assist doctors, not replace them. Final medical decisions must always remain with licensed professionals. Before using AI in patient care, systems should be validated, monitored regularly, and reviewed by clinical experts. Clear accountability and transparent documentation are essential.

No. AI is designed to support healthcare professionals, not replace them. It handles repetitive tasks, analyzes large volumes of data, and highlights potential risks. This allows doctors and staff to focus more on patient interaction and complex decision-making. Healthcare will always require human judgment, empathy, and accountability.

The timeline depends on the scope. Administrative AI tools can often be deployed within a few months. More advanced systems like clinical decision support may take longer because they require data preparation, testing, and governance approvals. A phased approach helps manage time and risk effectively.

AI systems require structured, accurate, and consistent data. This may include electronic health records, lab reports, imaging data, billing records, and patient history. Poor-quality data leads to unreliable outputs. That is why data cleaning and standardization should be completed before large-scale AI adoption.

Ethics should be addressed through formal governance policies. This includes:

- Protecting patient privacy

- Monitoring for bias

- Ensuring transparency in AI recommendations

- Maintaining human oversight

Many hospitals establish AI review committees to evaluate new systems and monitor performance regularly.

No. AI can be adapted to organizations of all sizes. The key is starting small and scaling gradually. Even smaller hospitals and specialty centers can benefit from AI tools in scheduling, billing, diagnostics support, and patient communication. The focus should be on solving real problems, not adopting technology because it is trending.

Common mistakes include:

- Implementing AI without clear goals

- Ignoring data quality issues

- Skipping staff training

- Lacking governance oversight

- Expanding too quickly without pilot testing

Avoiding these pitfalls increases the chances of long-term success.

Success should be evaluated based on predefined objectives. For example, reduced appointment no-shows, fewer billing errors, earlier risk detection, or improved patient feedback. Tracking outcomes regularly ensures that AI systems continue to provide value and remain aligned with organizational goals.

At Brevity Technology Solutions, we guide healthcare organizations through every stage of AI adoption. We begin with workflow analysis and data assessment. We then design a layered implementation roadmap tailored to your environment. Our approach focuses on structure, safety, and long-term scalability. If you are ready to explore AI in your organization, book a free consultation call with our team and take the first step toward responsible AI transformation in healthcare.
